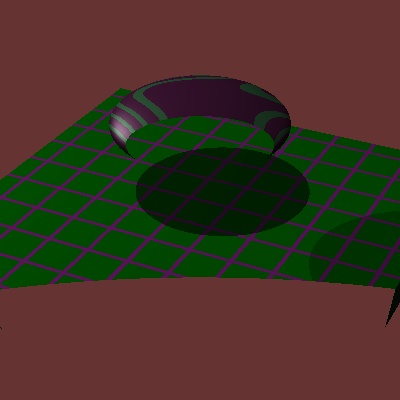

Intersections are computed in object space. Apply the inverse of the object's transform to the ray, so that the intersection points come out in object space.
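Below is a minimal sketch of inverse-transforming a ray. It is written in Python and stores 4x4 affine matrices as nested lists. The helper names are hypothetical. The source point is treated as a homogeneous point (w = 1), so translation applies to it. The direction is treated as a vector (w = 0), so translation is ignored.

```python
# Hypothetical helpers: a 4x4 affine matrix is a row-major nested list whose
# bottom row is assumed to be [0, 0, 0, 1].

def transform_point(m, p):
    """Apply m to a point (homogeneous w = 1), so translation is included."""
    return tuple(m[i][0] * p[0] + m[i][1] * p[1] + m[i][2] * p[2] + m[i][3]
                 for i in range(3))

def transform_vector(m, v):
    """Apply m to a direction (homogeneous w = 0), so translation is ignored."""
    return tuple(m[i][0] * v[0] + m[i][1] * v[1] + m[i][2] * v[2]
                 for i in range(3))

def ray_to_object_space(inv, origin, direction):
    """Map a world-space ray into object space using the inverse matrix inv.

    The direction is deliberately left unnormalized, so a hit at parameter t
    in object space is the same hit at parameter t on the world-space ray.
    """
    return transform_point(inv, origin), transform_vector(inv, direction)

# Example: a unit sphere scaled by 2 and then moved to (5, 0, 0).
# Its inverse is "translate by (-5, 0, 0), then scale by 1/2".
INV = [[0.5, 0.0, 0.0, -2.5],
       [0.0, 0.5, 0.0, 0.0],
       [0.0, 0.0, 0.5, 0.0],
       [0.0, 0.0, 0.0, 1.0]]

o, d = ray_to_object_space(INV, (0.0, 0.0, 0.0), (1.0, 0.0, 0.0))
# o == (-2.5, 0.0, 0.0), d == (0.5, 0.0, 0.0); the unit sphere is hit at t = 3,
# and the world ray at t = 3 is (3, 0, 0), the near side of the radius-2 sphere.
```

Leaving the direction unnormalized is a design choice. It keeps the ray parameter t identical in both spaces, so hits on different objects can be compared directly.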

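Build up the inverse transformation matrix incrementally, at the same time as you incrementally build the current transformation matrix (CTM). Each new transform T may be appended to the CTM as M ← M·T. In that case the inverse is updated as M⁻¹ ← T⁻¹·M⁻¹, because (M·T)⁻¹ = T⁻¹·M⁻¹.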
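The sketch below keeps both matrices in step. It assumes transforms are appended on the right of the CTM (M ← M·T). It also assumes only translations, nonzero scales and z-rotations are used, since each has a closed-form inverse. `TransformState` and the helper functions are hypothetical names:

```python
import math

def identity():
    return [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def translation(tx, ty, tz):
    m = identity()
    m[0][3], m[1][3], m[2][3] = tx, ty, tz
    return m

def scaling(sx, sy, sz):
    m = identity()
    m[0][0], m[1][1], m[2][2] = sx, sy, sz
    return m

def rotation_z(angle):
    c, s = math.cos(angle), math.sin(angle)
    m = identity()
    m[0][0], m[0][1], m[1][0], m[1][1] = c, -s, s, c
    return m

class TransformState:
    """Hypothetical helper: keeps the CTM and its inverse in step."""

    def __init__(self):
        self.ctm = identity()
        self.inv = identity()

    def _push(self, t, t_inv):
        self.ctm = matmul(self.ctm, t)       # M    <- M * T
        self.inv = matmul(t_inv, self.inv)   # M^-1 <- T^-1 * M^-1

    def translate(self, tx, ty, tz):
        self._push(translation(tx, ty, tz), translation(-tx, -ty, -tz))

    def scale(self, sx, sy, sz):
        # Assumes sx, sy, sz are nonzero, so the scale is invertible.
        self._push(scaling(sx, sy, sz), scaling(1.0 / sx, 1.0 / sy, 1.0 / sz))

    def rotate_z(self, angle):
        self._push(rotation_z(angle), rotation_z(-angle))

state = TransformState()
state.translate(5.0, 0.0, 0.0)
state.scale(2.0, 2.0, 2.0)
# state.inv is now "translate by (-5, 0, 0), then scale by 1/2": its top row
# is [0.5, 0.0, 0.0, -2.5].
```

Each elementary inverse is known exactly, so no general matrix inversion is needed. The product `matmul(state.ctm, state.inv)` stays equal to the identity, up to rounding for rotations.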

Pseudocode for the display procedure (some details omitted):

for each pixel

form ray

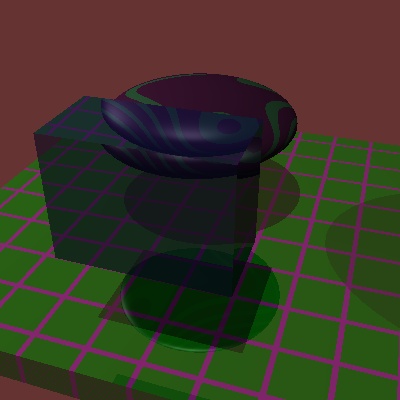

for each object

inverse transform ray (source point and direction) into object space

if (intersection of ray and object exists)

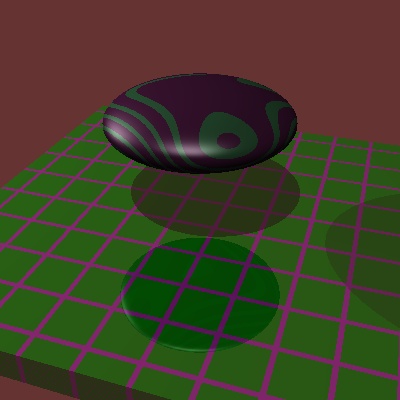

if (closest intersection so far) // compare in world space

transform point and normal back into world space

// besides intersection info, enough info to retrieve texture colors is also needed

record object, object space point, world point, world normal

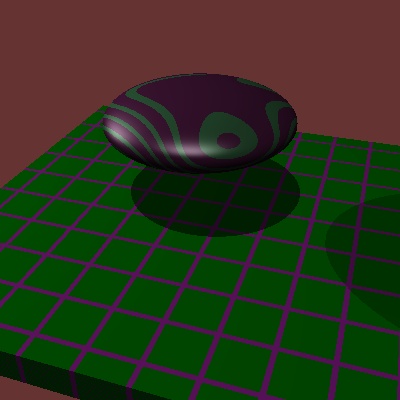

if closest object is textured

get texture color from object, texture and object space point

do illumination model using color, world point, world normal

record pixel color
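The steps of the display procedure can be combined into a small runnable sketch. It is not the original author's code, and it rests on several assumptions. Every object is a unit sphere placed by an affine matrix whose inverse is precomputed. A hypothetical 3D checker serves as the texture, and a Lambert term stands in for the illumination model. The camera is a pinhole at the origin looking down -z. All function names are hypothetical.

```python
import math

# Assumptions (hypothetical, for illustration only): every object is a unit
# sphere centred at its own object-space origin; obj["inv"] is the inverse of
# the affine matrix that places it in the world (row-major 4x4 nested list).

def xform_point(m, p):
    return tuple(m[i][0] * p[0] + m[i][1] * p[1] + m[i][2] * p[2] + m[i][3]
                 for i in range(3))

def xform_vector(m, v):
    return tuple(m[i][0] * v[0] + m[i][1] * v[1] + m[i][2] * v[2]
                 for i in range(3))

def xform_normal(inv, n):
    # Normals map by the transpose of the inverse (upper 3x3), then renormalize.
    w = tuple(inv[0][i] * n[0] + inv[1][i] * n[1] + inv[2][i] * n[2]
              for i in range(3))
    length = math.sqrt(sum(c * c for c in w))
    return tuple(c / length for c in w)

def hit_unit_sphere(o, d):
    """Smallest t > 0 with |o + t*d| = 1, or None if the ray misses."""
    a = sum(dc * dc for dc in d)
    b = 2.0 * sum(oc * dc for oc, dc in zip(o, d))
    c = sum(oc * oc for oc in o) - 1.0
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return None
    root = math.sqrt(disc)
    for t in ((-b - root) / (2.0 * a), (-b + root) / (2.0 * a)):
        if t > 1e-9:
            return t
    return None

def checker(p, base):
    # Hypothetical 3D checker, looked up with the OBJECT-space point.
    parity = (math.floor(4 * p[0]) + math.floor(4 * p[1]) + math.floor(4 * p[2])) % 2
    return base if parity == 0 else tuple(0.5 * c for c in base)

def shade_pixel(origin, direction, objects, light_dir):
    best_t, record = math.inf, None
    for obj in objects:
        # Inverse-transform the ray; d is not renormalized, so t is comparable
        # across objects and valid on the world ray too.
        o = xform_point(obj["inv"], origin)
        d = xform_vector(obj["inv"], direction)
        t = hit_unit_sphere(o, d)
        if t is not None and t < best_t:
            best_t = t
            obj_point = tuple(oc + t * dc for oc, dc in zip(o, d))
            # Same t on the world ray gives the world point (equivalent to
            # transforming obj_point by the forward matrix).
            world_point = tuple(pc + t * dc for pc, dc in zip(origin, direction))
            # A unit sphere's object-space normal is the hit point itself.
            world_normal = xform_normal(obj["inv"], obj_point)
            record = (obj, obj_point, world_point, world_normal)
    if record is None:
        return (0.0, 0.0, 0.0)  # assumed black background
    obj, obj_point, world_point, world_normal = record
    color = obj["color"]
    if obj.get("textured"):
        color = checker(obj_point, color)
    # Stand-in illumination model: Lambert term only (world_point would be
    # needed for point lights or shadow rays; unused here).
    k = max(0.0, sum(n * l for n, l in zip(world_normal, light_dir)))
    return tuple(c * k for c in color)

def render(width, height, objects, light_dir):
    eye = (0.0, 0.0, 0.0)
    image = []
    for j in range(height):
        row = []
        for i in range(width):
            # Form a ray through the pixel centre on the plane z = -1.
            x = (i + 0.5) / width * 2.0 - 1.0
            y = 1.0 - (j + 0.5) / height * 2.0
            row.append(shade_pixel(eye, (x, y, -1.0), objects, light_dir))
        image.append(row)
    return image
```

The texture lookup deliberately uses the object-space point rather than the world point. That way the pattern moves with the object when the object is transformed, which is why the pseudocode records both points.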